We'll do that later in the chapter, developing an improved version of our earlier program for classifying the MNIST handwritten digits, network.py.

- Understand Why Use Cross Entropy as Loss Function in Classification Problem – Deep Learning Tutorial
- Understand Entropy, Cross Entropy and KL Divergence in Deep Learning – A Simple Tutorial for Beginners
- Understand Softmax Function Gradient: A Beginner Guide – Deep Learning Tutorial

# Cross entropy how to

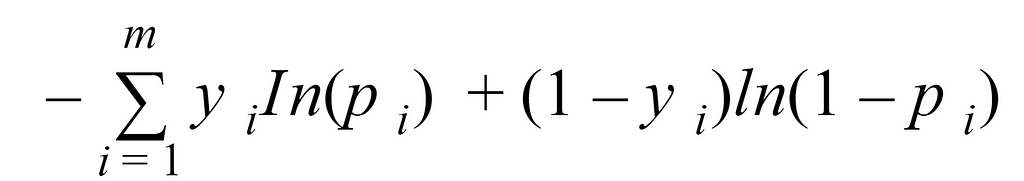

In this part, we will introduce how to compute the gradient of the cross entropy loss function.

Cross-entropy can be used to define a loss function in machine learning and optimization. The true probability is the true label, and the given distribution is the predicted value of the current model. Cross-entropy loss is also the loss used in logistic regression. For softmax outputs \(p_i = e^{o_i} / \sum_j e^{o_j}\) computed from the scores \(o_i\), the loss is:

\[ L = -\sum_i y_i \log p_i \]

where \(L\) is the cross entropy loss function and \(y_i\) is the label. Writing the prediction as \(\hat{y}_i\), the cross entropy loss can be expressed as:

\[ J = -\sum_{i=1}^{m} y_i \log \hat{y}_i \]

How do we compute the gradient of the cross entropy loss function? As to loss function \(L\), the gradient with respect to \(o_k\), where \(k\) is the correct class (\(y_k = 1\)), is computed as:

\[ \frac{\partial L}{\partial o_k} = p_k - 1 \]

However, as to \(o_j\) with \(j \neq k\), it is not a correct class (\(y_j = 0\)), and the gradient with respect to it is:

\[ \frac{\partial L}{\partial o_j} = p_j \]

Both cases can be written as \(\partial L / \partial o_i = p_i - y_i\). If you want to know how to compute the gradient of the softmax function, you can read "Understand Softmax Function Gradient: A Beginner Guide".

Binary cross-entropy loss is a sigmoid activation plus a cross-entropy loss. Unlike softmax loss, it is independent for each vector component (class), meaning that the loss computed for every CNN output vector component is not affected by other component values.

Figure 4 (Architecture of the Convolutional Neural Network) visualizes the high-level architecture of our network. [...] cross-entropy loss, as this architecture had worked well in a homework problem of CS 230.
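The gradient of the softmax cross entropy loss can be checked numerically. The following is a minimal sketch, not the tutorial's own code: the function names, the scores `[2.0, 3.0, 4.0]`, and the correct class index `1` are all assumptions chosen for illustration. It compares the closed form \(p_i - y_i\) against central finite differences.

```python
import math

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(o - m) for o in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(logits, k):
    """Loss L = -log p_k, where k is the index of the correct class."""
    return -math.log(softmax(logits)[k])

def analytic_grad(logits, k):
    """Closed form dL/do_i = p_i - y_i, with y one-hot at index k."""
    p = softmax(logits)
    return [p_i - (1.0 if i == k else 0.0) for i, p_i in enumerate(p)]

def numeric_grad(logits, k, eps=1e-6):
    """Central finite differences, used only as a sanity check."""
    grad = []
    for i in range(len(logits)):
        up = list(logits)
        down = list(logits)
        up[i] += eps
        down[i] -= eps
        grad.append((cross_entropy(up, k) - cross_entropy(down, k)) / (2 * eps))
    return grad

logits = [2.0, 3.0, 4.0]  # hypothetical scores o
k = 1                     # hypothetical correct class
ga = analytic_grad(logits, k)
gn = numeric_grad(logits, k)
print("analytic:", ga)
print("numeric: ", gn)
```

The component for the correct class is negative (raising \(o_k\) lowers the loss), the others are positive, and the components sum to zero because the softmax outputs sum to one.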

# Cross entropy plus

In this tutorial, we will discuss the gradient of the cross entropy loss function. We often use the softmax function for classification problems, and with softmax outputs \(p_i\) the cross entropy loss function can be defined as \(L = -\sum_i y_i \log p_i\). Binary cross-entropy loss, by contrast, is a sigmoid activation plus a cross-entropy loss.

Cross-entropy compares a model's predictions with the true probability distribution. It builds on the concept of entropy from information theory and measures the average number of bits needed to encode an event drawn from one distribution when using a code optimized for a different distribution.
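To illustrate the difference between a sigmoid plus cross-entropy loss and a softmax loss, here is a small sketch under assumed inputs. The logits, targets, and helper names are hypothetical. It changes one logit and shows which loss values move.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def binary_cross_entropy(logits, targets):
    """Per-component sigmoid cross-entropy; one loss value per class."""
    losses = []
    for o, t in zip(logits, targets):
        s = sigmoid(o)
        losses.append(-(t * math.log(s) + (1 - t) * math.log(1 - s)))
    return losses

def softmax_cross_entropy(logits, k):
    """-log softmax(logits)[k], computed via a stable log-sum-exp."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(o - m) for o in logits))
    return log_sum - logits[k]

targets = [1, 0, 0]            # hypothetical labels, class 0 is correct
logits_a = [2.0, -1.0, 0.5]    # hypothetical scores
logits_b = [2.0, -1.0, 3.0]    # same scores, only the third one changed

bce_a = binary_cross_entropy(logits_a, targets)
bce_b = binary_cross_entropy(logits_b, targets)
sm_a = softmax_cross_entropy(logits_a, 0)
sm_b = softmax_cross_entropy(logits_b, 0)

print("sigmoid CE per class:", bce_a, "->", bce_b)
print("softmax CE:", sm_a, "->", sm_b)
```

Only the third sigmoid loss changes, while the softmax loss for class 0 changes even though its own score did not, because the softmax normalizes over all scores.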

When we develop a model for probabilistic classification, we aim to map the model's inputs to probabilistic predictions, and we often train our model by incrementally adjusting the model's parameters so that our predictions get closer and closer to ground-truth probabilities. In this post, we'll focus on models that assume that classes are mutually exclusive.

For example, if we're interested in determining whether an image is best described as a landscape or as a house or as something else, then our model might accept an image as input and produce three numbers as output, each representing the probability of a single class. During training, we might put in an image of a landscape, and we hope that our model produces predictions that are close to the ground-truth class probabilities $y = (1.0, 0.0, 0.0)^T$.

The cross entropy loss function is widely used in classification problems in machine learning. Cross-entropy is a measure of the difference between two probability distributions for a given set of random variables or events.

Proposition 1: The measures defined in Equations (1) and (2) are the simplified neutrosophic cross-entropy, and satisfy conditions (1)-(3) given in.
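As a rough illustration of predictions getting closer to the ground truth, the sketch below evaluates the cross-entropy between $y = (1.0, 0.0, 0.0)^T$ and three made-up prediction vectors. The prediction values and the `cross_entropy` helper are assumptions for illustration only.

```python
import math

def cross_entropy(y_true, y_pred, eps=1e-12):
    """H(y, y_hat) = -sum_i y_i * log(y_hat_i), in nats.

    Predictions are clamped at eps to avoid log(0).
    """
    return -sum(t * math.log(max(p, eps)) for t, p in zip(y_true, y_pred))

y = [1.0, 0.0, 0.0]  # ground truth: landscape

# Hypothetical model outputs for (landscape, house, other)
predictions = {
    "poor": [0.2, 0.5, 0.3],
    "better": [0.6, 0.3, 0.1],
    "good": [0.95, 0.03, 0.02],
}

losses = {name: cross_entropy(y, p) for name, p in predictions.items()}
for name, loss in losses.items():
    print(f"{name}: {loss:.4f}")
```

With a one-hot label, the loss reduces to the negative log of the probability assigned to the true class, so it shrinks toward zero as that probability approaches 1.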